Logrotate Woes

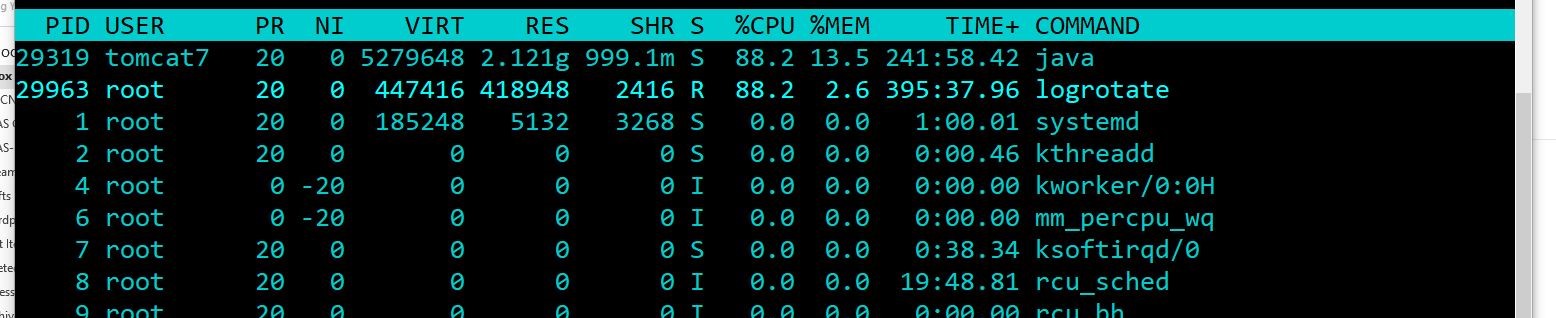

Logrotate is a fabulous tool and is in no way the source of my woe; that’s all me, I am afraid! In my youthful exuberance, I had made a silly mistake: rather than specify that I wanted to rotate files ending in “.log”, I had written ““. This caused logrotate to use huge amounts of CPU and get into a tizz, continually rotating like a spinning top (groan). In my case, I ended up with about 2.8 million files created over a few weeks and CPU usage topping 90% at times.

I am sure I am not the only one, but I was really grateful to read this post, so do check that out if you are in the same boat.

It goes into lots of detail about one way (possibly the best way) to resolve the issue and remove the many files that are produced. That’s not what this post is about, though; not because I like to perform sub-optimal actions (haha), but because in my case I wanted to remove the files without stopping the service that was producing them. There are many ways to crack a nut, so to speak, but in this case I produced a small bash script which would run through a file containing filenames and delete them one by one. If that sounds like something you would like to do, read on!

Creating the source file

Start by running the following command to produce our file of filenames:

```shell
ls > files.txt
```
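One thing worth knowing before going further: `ls` lists everything in the current directory, so files.txt will include itself and any live logs (in a Tomcat log directory, something like catalina.out) alongside the runaway rotations, and with millions of entries `ls` also spends a while sorting them. Here is a minimal sketch of a more targeted alternative using `find`, assuming the unwanted files share a name pattern you can describe with a glob; the function name `build_file_list` and the example pattern are hypothetical, not part of the original setup.

```shell
#!/usr/bin/env bash
# Sketch (hypothetical helper): write the names of matching regular files in
# the current directory to files.txt, one per line, instead of using `ls`.
# The glob passed as $1 is an assumption; describe your own runaway files.
build_file_list() {
  local pattern="$1"
  # -maxdepth 1 stays in this directory; -type f skips directories;
  # "! -name files.txt" keeps the list out of itself, because the shell
  # creates files.txt before find starts running.
  find . -maxdepth 1 -type f -name "$pattern" ! -name files.txt > files.txt
  # Print how many names were written, as a quick sanity check.
  wc -l < files.txt
}
```

For example, `build_file_list '*.log.*'` (a made-up pattern), followed by `head files.txt` to eyeball the names before deleting anything. Each name comes out as `./name`, which `rm` accepts as-is.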

The Script

Next we need to create our script. Type this into a file called delete-files.sh and save it.

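The listing for delete-files.sh has not survived in this copy of the post. The following is a minimal sketch reconstructed from the description in "Examining the Innards": the same `while IFS='' read -r line || [[ -n "$line" ]]` loop, input taken from the file named by the first command-line parameter, and a commented-out echo. Everything else, including the `delete_from_list` function name, the blank-line skip and the `rm -f --` call, is an assumption rather than the author's exact code.

```shell
#!/usr/bin/env bash
# delete-files.sh: reconstructed sketch, not the original listing.
# Deletes, one at a time, every file named (one per line) in the list file.

delete_from_list() {
  local list_file="$1" line
  # IFS='' keeps leading/trailing spaces; -r keeps backslashes literal;
  # the [[ -n ]] test still handles a last line with no trailing newline.
  while IFS='' read -r line || [[ -n "$line" ]]; do
    [[ -z "$line" ]] && continue   # skip blank lines (assumption)
    # echo "Deleting $line"        # uncomment to see progress (slower)
    rm -f -- "$line"               # -- protects names starting with "-"
  done < "$list_file"              # the loop's stdin is the list file
}

# Only run when a list file is given as the first parameter.
if [ -n "${1:-}" ]; then
  delete_from_list "$1"
fi
```

Saved as delete-files.sh, it is run exactly as in "Running the Script": `./delete-files.sh files.txt`.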

Now add the execute bit.

```shell
chmod +x delete-files.sh
```

Running the Script

Now you could just run this immediately like so:

```shell
./delete-files.sh files.txt
```

BUT! Bear in mind that this is itself going to be really resource-intensive. Moreover, if it takes ages, what do you do? You can’t just log out and leave it unattended, because logging out sends it a hangup signal (SIGHUP) that stops it. Let’s slow it down a little and make it more considerate of everything else running on the box.

```shell
root@host:/var/log/tomcat7# nohup ./delete-files.sh files.txt &
```

What we are basically doing is running the command in the background (`&`) under `nohup`, so that when we log out it will continue chugging along. We then take the process ID of that running task (30070 in my case) and renice it, lowering its priority so it is not so greedy.
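The renice command itself is not shown here, so this is a minimal sketch of that step. It assumes util-linux `renice` and procps `ps` (as on most Linux distributions), uses 19, the lowest CPU priority, because the value used in the post is not known, and wraps the step in a hypothetical helper called `lower_priority`.

```shell
#!/usr/bin/env bash
# Sketch (hypothetical helper): lower the CPU priority of a running process.
# Niceness 19 means it only gets CPU time nothing else wants; the value
# used in the original post is unknown, so 19 is an assumption.
lower_priority() {
  local pid="$1"
  renice -n 19 -p "$pid" > /dev/null
  # Print the resulting niceness so the change can be checked.
  ps -o ni= -p "$pid" | tr -d ' '
}
```

Because `$!` holds the PID of the most recent background job, calling `lower_priority "$!"` straight after the `nohup ... &` line saves copying the PID by hand. Alternatively, `nice -n 19 nohup ./delete-files.sh files.txt &` starts the job at low priority in the first place; and since deleting millions of files is mostly disk work, `ionice -c 3 -p "$!"` (the idle I/O class) may help more than CPU niceness, depending on the kernel's I/O scheduler.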

Examining the Innards

Let’s talk a little about how the script works in case you want to alter it.

```shell
while IFS='' read -r line || [[ -n "$line" ]]; do
```

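Keep reading lines from the standard input (you will see how that works in a minute) and continue while either a whole line (ending in a newline) can be read OR there is at least something in `$line` (`[[ -n "$line" ]]`). That second test catches a final line with no trailing newline: `read` reports failure when it hits the end of the file, but it still fills `$line` with whatever it found. Meanwhile, `IFS=''` stops `read` trimming leading and trailing whitespace from each filename, and `-r` stops backslashes being treated as escape characters.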
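To see why the `|| [[ -n "$line" ]]` part matters, here is a small sketch (the two function names are made up for the demo) that counts the lines each version of the loop sees when the input's last line has no trailing newline.

```shell
#!/usr/bin/env bash
# Count lines read from stdin with a plain read loop.
count_plain() {
  local n=0 line
  while IFS='' read -r line; do
    n=$((n + 1))
  done
  echo "$n"
}

# The same loop plus the fallback test from the script's while line.
count_with_fallback() {
  local n=0 line
  while IFS='' read -r line || [[ -n "$line" ]]; do
    n=$((n + 1))
  done
  echo "$n"
}
```

`printf 'a.log.1\na.log.2' | count_plain` prints 1, because `read` fails on the unterminated second line, while `count_with_fallback` prints 2. In the deletion script, that missed line would be a file left behind.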

The loop’s standard input is the file named in the first parameter on the command line (`$1`), which is redirected into it. The rest of the script is pretty straightforward. Consider uncommenting the line which echoes what is being deleted, but be aware that it will slow things down quite a bit. Anyway, I’d better go; I’ve got some files to remove.
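If you do want some feedback, echoing every one of 2.8 million names is a lot of output, and under `nohup` it all ends up in nohup.out. A possible middle ground, sketched here as a hypothetical variant of the loop rather than anything from the original script, is to print a timestamped count every so often; the `delete_with_progress` name and the default interval of 10000 are assumptions.

```shell
#!/usr/bin/env bash
# Hypothetical variant: delete each listed file, printing a progress line
# every N files (N = $2, default 10000) instead of one line per file.
delete_with_progress() {
  local list_file="$1" every="${2:-10000}" count=0 line
  while IFS='' read -r line || [[ -n "$line" ]]; do
    [[ -z "$line" ]] && continue   # skip blank lines
    rm -f -- "$line"
    count=$((count + 1))           # names processed so far
    if (( count % every == 0 )); then
      echo "$(date '+%F %T') processed $count files"
    fi
  done < "$list_file"
  echo "done: processed $count files"
}
```

Calling `delete_with_progress files.txt 50000`, for instance, would log one line per 50,000 names plus a final total.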

Hi! Did you find this useful or interesting? I have an email list coming soon, but in the meantime, if you read anything you fancy chatting about, I would love to hear from you. You can contact me here or at stephen ‘at’ logicalmoon.com